From Intelligent Agent to Deterministic Orchestration – Designing AI Workflows with Structural Control

A deep dive into designing a deterministic orchestration layer for agentic AI using AWS Step Functions. This post explores how to combine probabilistic LLM planning with structured execution boundaries, human-in-the-loop governance, and production-aware workflow design.

AI & INTELLIGENT SYSTEMS | ARCHITECTURE & ORCHESTRATION | ENTERPRISE SYSTEMS

Nagaraj Basarkod

2/24/2026 · 2 min read

Most discussions around agentic AI focus on model capabilities: reasoning, tool calling, or multi-agent frameworks. Far fewer explore what happens after the model decides to act. How do you translate probabilistic reasoning into deterministic execution? How do you handle tool failures, approvals, and business constraints without losing control of the workflow?

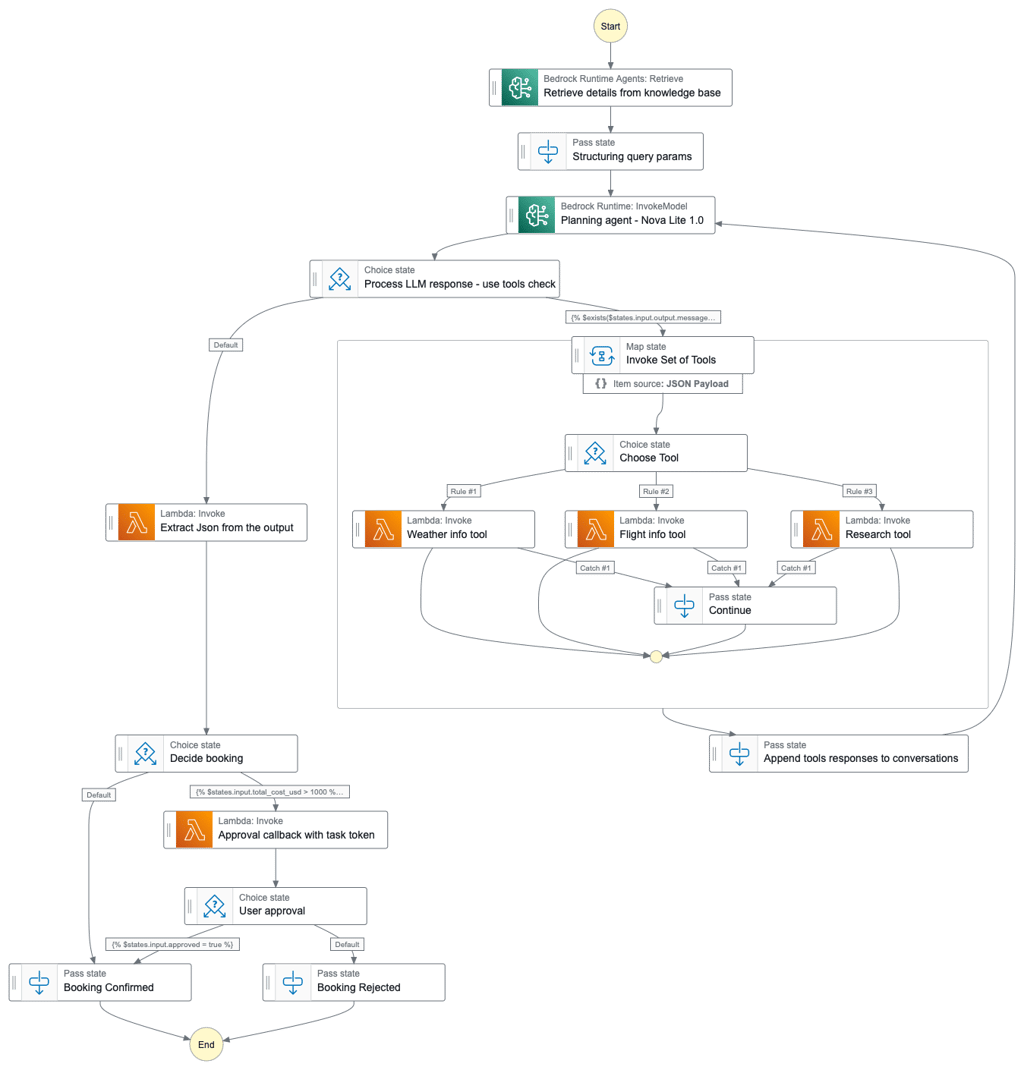

In this prototype, I designed and implemented an end-to-end agentic orchestration layer using AWS Step Functions and Amazon Bedrock. The system demonstrates how LLM planning can be combined with structured state transitions, tool routing, human-in-the-loop approvals, and deterministic decision boundaries.

This is not a production system. It is a working internal prototype built to explore how agentic systems can move from experimentation to structured, observable execution.

The Core Shift: Intelligence vs Structure

Orchestration is not new. Enterprise systems have long relied on workflow engines to coordinate business logic. What changes with AI is not the need for orchestration, but the presence of a probabilistic reasoning engine inside that workflow.

LLMs operate in probability space. Business systems operate in constraint space.

The challenge is combining both without allowing one to undermine the other.

Architecture Overview

The system separates cognitive intelligence from structural execution:

LLM (Amazon Nova Lite via Bedrock) for reasoning and tool selection

AWS Step Functions as the orchestration spine

Lambda functions as deterministic tool executors

Knowledge Base (RAG) for contextual grounding

DynamoDB + Callback pattern for human-in-the-loop approvals

API Gateway for resuming execution

The LLM plans. The state machine governs.
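One way to make that split concrete is to have the planning step return only a structured plan, and to validate it against an allow-list before any tool runs. The sketch below assumes the planner calls the Bedrock Runtime Converse API with tool definitions, so tool requests arrive as `toolUse` content blocks. The tool names, `ALLOWED_TOOLS`, `extract_plan`, and `plan_step` are hypothetical, and the model ID (for example `amazon.nova-lite-v1:0` or a regional inference-profile ID) depends on the account setup; this is not the prototype's code.

```python
from typing import Any

# Hypothetical allow-list: the only tools the state machine will route to.
ALLOWED_TOOLS = {"get_weather", "research_destination", "search_flights"}


def extract_plan(converse_response: dict[str, Any]) -> dict[str, Any]:
    """Turn a Bedrock Converse response into a plan the state machine can branch on.

    Assumes the Converse response shape: output.message.content is a list of
    blocks, and tool requests appear as {"toolUse": {...}} blocks.
    """
    blocks = converse_response["output"]["message"]["content"]
    tool_calls = []
    text_parts = []
    for block in blocks:
        if "toolUse" in block:
            use = block["toolUse"]
            if use["name"] not in ALLOWED_TOOLS:
                # Deterministic rejection: the model cannot invent new actions.
                raise ValueError(f"planner requested unknown tool: {use['name']}")
            tool_calls.append(
                {"id": use["toolUseId"], "name": use["name"], "input": use["input"]}
            )
        elif "text" in block:
            text_parts.append(block["text"])
    return {
        # A Choice state can branch on this boolean instead of parsing free text.
        "needs_tools": bool(tool_calls),
        "tool_calls": tool_calls,
        "answer": "\n".join(text_parts),
    }


def plan_step(client, model_id: str, messages: list, tool_config: dict) -> dict:
    """Call the model, then hand back only the validated, structured plan.

    `client` is assumed to be a boto3 "bedrock-runtime" client.
    """
    response = client.converse(
        modelId=model_id, messages=messages, toolConfig=tool_config
    )
    return extract_plan(response)
```

The model still decides *which* tools it wants; the plan object only decides *whether* the workflow branches, and anything outside the allow-list fails loudly before execution.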

Below is the actual Step Functions state machine used in the prototype. The orchestration structure enforces deterministic execution boundaries while the LLM handles reasoning.

Execution Flow

User submits a travel query.

RAG retrieves contextual information from the knowledge base.

The planning agent (LLM) reasons about required actions.

If tool calls are required, execution transitions into a Map state.

Individual Lambda functions execute deterministic tool logic (weather, research, flights).

Tool outputs are appended to the execution context.

The planner re-evaluates with enriched context.

Budget constraints are evaluated.

If the cost exceeds the approval threshold, a task-token-based approval workflow is triggered.

Based on the user's approval or rejection, execution completes deterministically.

Instead of recursive reasoning inside the model, each decision becomes an explicit state transition. This preserves transparency, observability, and replayability.
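The flow above maps onto a small set of Amazon States Language (ASL) states. The sketch below builds a simplified definition as a Python dict so it can be serialized with `json.dumps`. All state names, function names, and ARNs are placeholders, and the `$.plan.needs_tools`, `$.budget.requires_approval`, and `$.approval.approved` fields are assumed output shapes, not the prototype's actual definition. Because `ResultPath` overwrites rather than appends, the planner Lambda is assumed to fold `$.tool_results` into its own conversation history, and this sketch has no iteration cap.

```python
import json

# Placeholder ARN template: region, account, and function names are assumptions.
FN = "arn:aws:lambda:us-east-1:123456789012:function:{}"


def task(fn_name: str, result_path: str, next_state: str) -> dict:
    """A Lambda Task whose return value is written to result_path."""
    return {"Type": "Task", "Resource": FN.format(fn_name),
            "ResultPath": result_path, "Next": next_state}


def bool_choice(variable: str, if_true: str, otherwise: str) -> dict:
    """A Choice state that branches on a boolean field of the state input."""
    return {"Type": "Choice",
            "Choices": [{"Variable": variable, "BooleanEquals": True, "Next": if_true}],
            "Default": otherwise}


DEFINITION = {
    "Comment": "Sketch of a plan/act/approve loop",
    "StartAt": "Retrieve",
    "States": {
        "Retrieve": task("rag-retrieve", "$.context", "Plan"),
        "Plan": task("planner", "$.plan", "NeedsTools"),
        "NeedsTools": bool_choice("$.plan.needs_tools", "ExecuteTools", "EvaluateBudget"),
        "ExecuteTools": {
            "Type": "Map",
            "ItemsPath": "$.plan.tool_calls",  # one iteration per requested tool
            "ItemProcessor": {
                "ProcessorConfig": {"Mode": "INLINE"},
                "StartAt": "RunTool",
                "States": {"RunTool": {"Type": "Task",
                                       "Resource": FN.format("tool-router"),
                                       "End": True}},
            },
            "ResultPath": "$.tool_results",
            "Next": "Plan",  # loop back: the planner re-evaluates with tool output
        },
        "EvaluateBudget": task("evaluate-budget", "$.budget", "OverBudget"),
        "OverBudget": bool_choice("$.budget.requires_approval", "RequestApproval", "Finalize"),
        "RequestApproval": {
            "Type": "Task",
            # Pauses until SendTaskSuccess/SendTaskFailure is called with the token.
            "Resource": "arn:aws:states:::lambda:invoke.waitForTaskToken",
            "Parameters": {"FunctionName": FN.format("store-approval-token"),
                           "Payload": {"token.$": "$$.Task.Token", "request.$": "$"}},
            "ResultPath": "$.approval",
            "Next": "ApprovalDecision",
        },
        "ApprovalDecision": bool_choice("$.approval.approved", "Finalize", "Rejected"),
        "Finalize": {"Type": "Pass", "Result": {"status": "completed"},
                     "ResultPath": "$.outcome", "End": True},
        "Rejected": {"Type": "Pass", "Result": {"status": "rejected"},
                     "ResultPath": "$.outcome", "End": True},
    },
}


def dangling_targets(definition: dict) -> list[str]:
    """Return any Next/Default/Choice targets that do not name a top-level state."""
    states = definition["States"]
    missing = []
    for state in states.values():
        targets = [state.get("Next"), state.get("Default")]
        targets += [rule["Next"] for rule in state.get("Choices", [])]
        missing += [t for t in targets if t is not None and t not in states]
    return missing
```

`json.dumps(DEFINITION)` produces the string that boto3's `create_state_machine` takes as its `definition` argument (alongside `name` and `roleArn`). Keeping every branch as a named state is what makes each decision visible in the execution history.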

Why Orchestration Matters

1. Structural Boundary

The model proposes actions. The state machine enforces rules.

Approval thresholds, budget limits, and execution paths are deterministic. They are not left to model interpretation.
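A minimal sketch of such a deterministic budget check, written as a Lambda-style handler. It assumes each tool result may carry a numeric `estimated_cost` field and that the threshold comes from configuration; the `APPROVAL_THRESHOLD` environment variable, the default of 1000, and the output field names are hypothetical. The boolean output is what a Choice state would branch on.

```python
import os
from decimal import Decimal

# Hypothetical default used when no APPROVAL_THRESHOLD is configured.
DEFAULT_THRESHOLD = Decimal("1000")


def evaluate_budget(event: dict, context=None) -> dict:
    """Sum tool-reported costs and flag plans that exceed the threshold.

    Assumes event["tool_results"] is a list of dicts that may include
    "estimated_cost"; results without a cost contribute nothing.
    """
    threshold = Decimal(os.environ.get("APPROVAL_THRESHOLD", DEFAULT_THRESHOLD))
    total = sum(
        (Decimal(str(r.get("estimated_cost", 0))) for r in event.get("tool_results", [])),
        Decimal("0"),
    )
    return {
        "total_cost": str(total),  # serialized as a string to avoid float drift
        "threshold": str(threshold),
        # Strictly greater than: a plan exactly at the threshold proceeds.
        "requires_approval": total > threshold,
    }
```

The model never sees or interprets this rule; it only produces the tool results the rule is applied to.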

2. Governance

Service-level expectations, financial constraints, and compliance boundaries cannot rely on probabilistic reasoning. Human-in-the-loop gating ensures that high-impact actions remain controlled.
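The callback pattern behind this gating can be sketched as two small handlers: one invoked by the waiting `waitForTaskToken` task that stores the task token in DynamoDB, and one behind API Gateway that looks the token up and resumes the execution. The table name `ApprovalRequests`, its `request_id` key, and the request and response shapes are assumptions; the AWS calls used are DynamoDB's `put_item`/`get_item` and Step Functions' `send_task_success` via boto3. Clients are injectable so the logic can be exercised without AWS.

```python
import json
import uuid

TABLE = "ApprovalRequests"  # hypothetical table with partition key "request_id"


def store_approval_token(event, context=None, ddb=None):
    """Invoked by the waitForTaskToken task; the execution stays paused."""
    if ddb is None:
        import boto3
        ddb = boto3.client("dynamodb")
    request_id = str(uuid.uuid4())
    ddb.put_item(
        TableName=TABLE,
        Item={
            "request_id": {"S": request_id},
            "task_token": {"S": event["token"]},
            "status": {"S": "PENDING"},
        },
    )
    # The task result comes from the later callback, not from this return value;
    # in practice request_id would be sent to the approver (email, UI, ...).
    return {"request_id": request_id}


def resume_handler(event, context=None, ddb=None, sfn=None):
    """API Gateway (Lambda proxy) handler for POST {"request_id": ..., "approved": bool}."""
    if ddb is None or sfn is None:
        import boto3
        ddb = ddb or boto3.client("dynamodb")
        sfn = sfn or boto3.client("stepfunctions")
    body = json.loads(event["body"])
    item = ddb.get_item(
        TableName=TABLE, Key={"request_id": {"S": body["request_id"]}}
    ).get("Item")
    if item is None:
        return {"statusCode": 404, "body": json.dumps({"error": "unknown request"})}
    approved = bool(body["approved"])
    # Resume the paused execution; this JSON becomes the waiting task's output.
    sfn.send_task_success(
        taskToken=item["task_token"]["S"],
        output=json.dumps({"approved": approved}),
    )
    return {"statusCode": 200, "body": json.dumps({"approved": approved})}
```

Rejection is reported here as a successful callback with `approved: false`, so the workflow can branch to an explicit rejected state; calling `send_task_failure` instead would route the rejection through the task's error handling.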

3. Observability

Agents abstract complexity. State machines expose it.

Each transition is visible. Each failure is traceable. Each tool invocation is auditable. This transforms AI workflows from opaque reasoning chains into inspectable system behavior.
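Because every step is a state transition, the audit trail can be read back from the execution history. The sketch below assumes events shaped like those returned by the Step Functions `GetExecutionHistory` API (boto3 `get_execution_history`), where `*StateEntered` events carry `stateEnteredEventDetails.name` and failure events carry a `*FailedEventDetails` object with an `error` field. `transition_trail` and `failures` are hypothetical helpers, and pagination via `nextToken` is left out.

```python
def transition_trail(events: list[dict]) -> list[str]:
    """Return the ordered list of state names entered during an execution."""
    trail = []
    for event in sorted(events, key=lambda e: e["id"]):
        if event["type"].endswith("StateEntered"):
            trail.append(event["stateEnteredEventDetails"]["name"])
    return trail


def failures(events: list[dict]) -> list[tuple[str, str]]:
    """Collect (event type, error name) for every *Failed event."""
    found = []
    for event in sorted(events, key=lambda e: e["id"]):
        if event["type"].endswith("Failed"):
            # The details key varies by event type, e.g. taskFailedEventDetails.
            details = next(
                (v for k, v in event.items() if k.endswith("FailedEventDetails")), {}
            )
            found.append((event["type"], details.get("error", "unknown")))
    return found


# Assumed usage with a real client (not executed here):
# events = boto3.client("stepfunctions").get_execution_history(
#     executionArn=execution_arn, maxResults=1000)["events"]
# print(transition_trail(events), failures(events))
```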

4. Resilience

When an agent fails internally, the system state can become ambiguous. Orchestration introduces structured failure handling, retry paths, and controlled termination states. Determinism becomes a design principle.
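In Step Functions these failure paths are declared rather than coded: a Task can carry `Retry` rules with exponential backoff and `Catch` rules that route to a fallback state. The snippet below attaches both to a hypothetical tool task and computes the waits the declared retry rule implies. The error names are Step Functions' built-in `States.*` errors and common Lambda service exceptions; the ARN and the `ToolFailed` state are placeholders, and the delay helper ignores `MaxDelaySeconds` and `JitterStrategy`.

```python
TOOL_TASK = {
    "Type": "Task",
    "Resource": "arn:aws:lambda:us-east-1:123456789012:function:search-flights",  # placeholder
    "TimeoutSeconds": 30,
    "Retry": [
        {
            # Transient faults: retry with exponential backoff.
            "ErrorEquals": [
                "Lambda.ServiceException",
                "Lambda.TooManyRequestsException",
                "States.Timeout",
            ],
            "IntervalSeconds": 2,
            "MaxAttempts": 3,
            "BackoffRate": 2.0,
        }
    ],
    "Catch": [
        {
            # Anything still failing goes to an explicit fallback state, with the
            # error details stored at $.error instead of replacing the input.
            "ErrorEquals": ["States.ALL"],
            "ResultPath": "$.error",
            "Next": "ToolFailed",
        }
    ],
    "End": True,  # e.g. the last state of a Map iteration
}


def retry_delays(rule: dict) -> list[float]:
    """Wait before retry n (1-based): IntervalSeconds * BackoffRate ** (n - 1).

    Uses the ASL defaults (1 second, 3 attempts, rate 2.0) for omitted fields.
    """
    interval = rule.get("IntervalSeconds", 1)
    rate = rule.get("BackoffRate", 2.0)
    return [interval * rate ** n for n in range(rule.get("MaxAttempts", 3))]
```

For the rule above, `retry_delays(TOOL_TASK["Retry"][0])` gives `[2.0, 4.0, 8.0]`: three retries spread over about 14 seconds before the Catch takes over.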

Key Insight: Loop-Based Planning Under Control

The most important design decision was externalizing tool calls.

Rather than allowing the model to recursively reason internally, every tool call becomes a state transition. The results are appended to context and fed back to the planner.

This creates:

Controlled iteration

Explicit execution boundaries

Clear separation between reasoning and action

The LLM remains intelligent, but not sovereign.
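The bounded plan, act, re-plan cycle can be simulated in plain Python to show the properties it is meant to guarantee: every tool call passes through an explicit dispatch step, results are appended to the context the planner sees next, and the number of iterations is capped by the orchestrator rather than by the model. Everything here is hypothetical: `planner` stands in for the LLM call, the tool functions stand in for Lambda executors, and the default cap of 3 is arbitrary. In a state machine, one option would be a Choice rule on an iteration counter.

```python
from typing import Callable

Plan = dict  # {"tool_calls": [{"name": ..., "input": ...}], "answer": str}


def run_controlled_loop(
    query: str,
    planner: Callable[[list[dict]], Plan],
    tools: dict[str, Callable[[dict], dict]],
    max_iterations: int = 3,
) -> dict:
    """Plan -> execute tools -> append results -> re-plan, with a hard cap."""
    context: list[dict] = [{"role": "user", "content": query}]
    transitions: list[str] = []
    for iteration in range(1, max_iterations + 1):
        transitions.append(f"Plan#{iteration}")
        plan = planner(context)
        if not plan["tool_calls"]:
            transitions.append("Finalize")
            return {"answer": plan["answer"], "transitions": transitions}
        for call in plan["tool_calls"]:
            if call["name"] not in tools:
                raise ValueError(f"unknown tool: {call['name']}")
            transitions.append(f"Tool:{call['name']}")
            result = tools[call["name"]](call["input"])  # deterministic executor
            context.append({"role": "tool", "name": call["name"], "content": result})
    # Cap reached: terminate in an explicit state instead of looping forever.
    transitions.append("IterationLimitReached")
    return {"answer": None, "transitions": transitions}
```

The returned `transitions` list plays the role of the execution history: even when the planner never converges, the run ends in a named terminal state.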

Limitations & Next Steps

This prototype has constraints:

Memory is scoped to individual executions.

Tool error handling can be extended with retries and circuit breakers.

Cost observability and token budgeting require refinement.

Persistent conversational state would require durable storage.

Multi-agent specialization could improve modular reasoning.

The goal was not completeness. The goal was structural clarity.

My Takeaways

Intelligence without orchestration is incomplete.

Deterministic systems require explicit structural boundaries.

Human-in-the-loop is a governance mechanism, not an add-on.

LLMs are cognitive nodes within larger systems, not the system itself.

The differentiator is not the model. It is the system you build around it.
